Weakly-supervised Visual Grounding of Phrases with Linguistic Structures

Presenting in CVPR 2017

People

Abstract

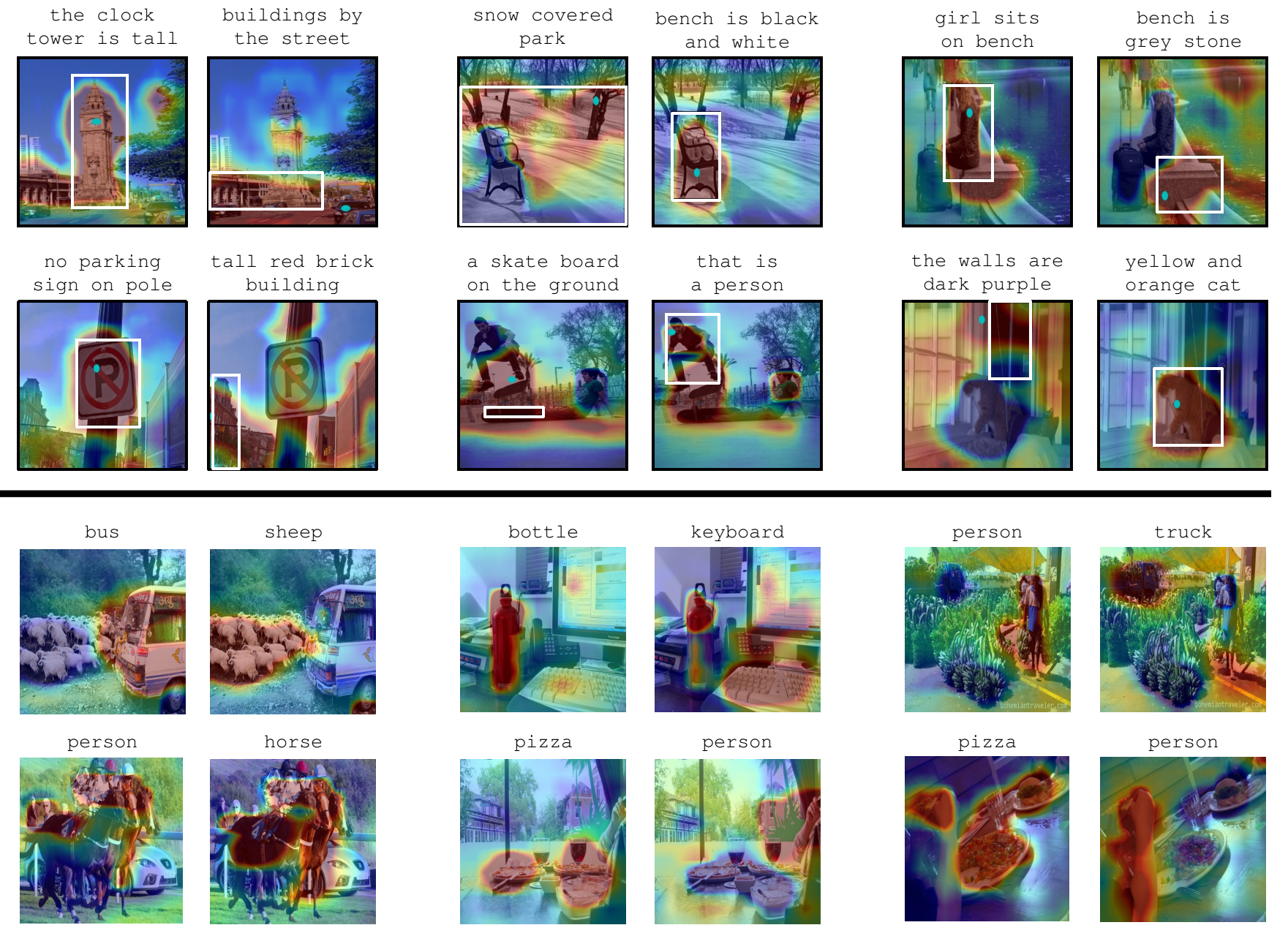

We propose a weakly-supervised approach that takes image-sentence pairs as input and learns to visually ground (i.e., localize) arbitrary linguistic phrases, in the form of spatial attention masks. Specifically, the model is trained with images and their associated image-level captions, without any explicit region-to-phrase correspondence annotations. To this end, we introduce an end-to-end model which learns visual groundings of phrases with two types of carefully designed loss functions. In addition to the standard discriminative loss, which enforces that attended image regions and phrases are consistently encoded, we propose a novel structural loss which makes use of the parse tree structures induced by the sentences. In particular, we ensure complementarity among the attention masks that correspond to sibling noun phrases, and compositionality of attention masks among the children and parent phrases, as defined by the sentence parse tree. We validate the effectiveness of our approach on the Microsoft COCO and Visual Genome datasets.

Paper

|

|

Additional materials

Press coverage

Acknowledgments

A big thanks to Dave, who has been super responsive and helpful for any of our system issue!

This research was supported partially by NVidia hardware grant

Comments, questions to Fanyi Xiao